- Preface

- 1. Getting Started

- 2. Basic Concepts

- 2.1. Introduction

- 2.2. Basic Select

- 2.3. Basic Aggregation

- 2.4. Basic Filter

- 2.5. Basic Filter and Aggregation

- 2.6. Basic Data Window

- 2.7. Basic Data Window and Aggregation

- 2.8. Basic Filter, Data Window and Aggregation

- 2.9. Basic Where-Clause

- 2.10. Basic Time Window and Aggregation

- 2.11. Basic Partitioned Statement

- 2.12. Basic Output-Rate-Limited Statement

- 2.13. Basic Partitioned and Output-Rate-Limited Statement

- 2.14. Basic Named Windows and Tables

- 2.15. Basic Aggregated Statement Types

- 2.16. Basic Match-Recognize Patterns

- 2.17. Basic EPL Patterns

- 2.18. Basic Indexes

- 2.19. Basic Null

- 3. Event Representations

- 3.1. Event Underlying Java Objects

- 3.2. Event Properties

- 3.3. Dynamic Event Properties

- 3.4. Fragment and Fragment Type

- 3.5. Comparing Event Representations

- 3.6. Support for Generic Tuples

- 3.7. Updating, Merging and Versioning Events

- 3.8. Coarse-Grained Events

- 3.9. Event Objects Instantiated and Populated by Insert Into

- 3.10. Event Type Uniqueness

- 4. Context and Context Partitions

- 4.1. Introduction

- 4.2. Context Declaration

- 4.3. Context Nesting

- 4.4. Partitioning Without Context Declaration

- 4.5. Output When a Context Partition Starts (Non-Overlapping Context) or Initiates (Overlapping Context)

- 4.6. Output When a Context Partition Ends (Non-Overlapping Context) or Terminates (Overlapping Context)

- 4.7. Context and Named Window

- 4.8. Context and Tables

- 4.9. Context and Variables

- 4.10. Operations on Specific Context Partitions

- 5. EPL Reference: Clauses

- 5.1. EPL Introduction

- 5.2. EPL Syntax

- 5.2.1. Specifying Time Periods

- 5.2.2. Using Comments

- 5.2.3. Reserved Keywords

- 5.2.4. Escaping Strings

- 5.2.5. Data Types

- 5.2.6. Using Constants and Enum Types

- 5.2.7. Annotation

- 5.2.8. Expression Alias

- 5.2.9. Expression Declaration

- 5.2.10. Inlined-Class Declaration

- 5.2.11. Script Declaration

- 5.2.12. Referring to a Context

- 5.2.13. Composite Keys and Array Values as Keys

- 5.3. Choosing Event Properties and Events: The Select Clause

- 5.3.1. Choosing the Event Itself: Select *

- 5.3.2. Choosing Specific Event Properties

- 5.3.3. Expressions

- 5.3.4. Renaming Event Properties

- 5.3.5. Choosing Event Properties and Events in a Join

- 5.3.6. Choosing Event Properties and Events From a Pattern

- 5.3.7. Selecting Insert and Remove Stream Events

- 5.3.8. Select Distinct

- 5.3.9. Transposing an Expression Result to a Stream

- 5.3.10. Selecting EventBean Instead of Underlying Event

- 5.4. Specifying Event Streams: The From Clause

- 5.5. Specifying Search Conditions: The Where Clause

- 5.6. Aggregates and Grouping: The Group-By Clause and the Having Clause

- 5.6.1. Using Aggregate Functions

- 5.6.2. Organizing Statement Results into Groups: The Group-by Clause

- 5.6.3. Using Group-By with Rollup, Cube and Grouping Sets

- 5.6.4. Specifying Grouping for Each Aggregation Function

- 5.6.5. Specifying a Filter Expression for Each Aggregation Function

- 5.6.6. Selecting Groups of Events: The Having Clause

- 5.6.7. How the Stream Filter, Where, Group By and Having-Clauses Interact

- 5.6.8. Comparing Keyed Segmented Context, the Group By Clause and #groupwin for Data Windows

- 5.7. Stabilizing and Controlling Output: The Output Clause

- 5.8. Sorting Output: the Order By Clause

- 5.9. Limiting Row Count: the Limit Clause

- 5.10. Merging Streams and Continuous Insertion: The Insert Into Clause

- 5.10.1. Transposing a Property to a Stream

- 5.10.2. Merging Streams by Event Type

- 5.10.3. Merging Disparate Types of Events: Variant Streams

- 5.10.4. Decorated Events

- 5.10.5. Event as a Property

- 5.10.6. Instantiating and Populating an Underlying Event Object

- 5.10.7. Transposing an Expression Result

- 5.10.8. Select-Clause Expression and Inserted-Into Column Event Type

- 5.10.9. Insert Into for Event Types Without Properties

- 5.10.10. Insert Into and Event Precedence

- 5.11. Subqueries

- 5.12. Joining Event Streams

- 5.13. Accessing Relational Data via SQL

- 5.13.1. Joining SQL Query Results

- 5.13.2. SQL Query and the EPL Where Clause

- 5.13.3. Outer Joins With SQL Queries

- 5.13.4. Using Patterns to Request (Poll) Data

- 5.13.5. Polling SQL Queries via Iterator

- 5.13.6. JDBC Implementation Overview

- 5.13.7. Oracle Drivers and No-Metadata Workaround

- 5.13.8. SQL Input Parameter and Column Output Conversion

- 5.13.9. SQL Row POJO Conversion

- 5.13.10. Executing SQL Fire-and-Forget Queries Using EPFireAndForgetService

- 5.14. Accessing Non-Relational Data via Method, Script or UDF Invocation

- 5.15. Declaring an Event Type: Create Schema

- 5.16. Splitting and Duplicating Streams

- 5.17. Variables and Constants

- 5.18. Declaring Global Expressions, Aliases and Scripts: Create Expression

- 5.19. Contained-Event Selection

- 5.19.1. Select-Clause in a Contained-Event Selection

- 5.19.2. Where Clause in a Contained-Event Selection

- 5.19.3. Contained-Event Selection and Joins

- 5.19.4. Sentence and Word Example

- 5.19.5. More Examples

- 5.19.6. Contained Expression Returning an Array of Property Values

- 5.19.7. Contained Expression Returning an Array of EventBean

- 5.19.8. Contained Expression Returning a Collection of Underlying Event Objects

- 5.19.9. Generating Marker Events Such as a Begin and End Event

- 5.19.10. Contained-Event Limitations

- 5.20. Updating an Insert Stream: The Update IStream Clause

- 5.21. Controlling Event Delivery : The For Clause

- 6. EPL Reference: Named Windows and Tables

- 6.1. Overview

- 6.2. Named Window Usage

- 6.3. Table Usage

- 6.3.1. Creating Tables: The Create Table Clause

- 6.3.2. Aggregating Into Table Rows: The Into Table Clause

- 6.3.3. Table Column Keyed-Access Expressions

- 6.3.4. Inserting Into Tables

- 6.3.5. Selecting From Tables

- 6.3.6. Resetting Table Columns and Aggregation State

- 6.3.7. Initializing Table Columns and Aggregation State

- 6.4. Triggered Select: The On Select Clause

- 6.5. Triggered Select+Delete: The On Select Delete Clause

- 6.6. Updating Data: The On Update Clause

- 6.7. Deleting Data: The On Delete Clause

- 6.8. Triggered Upsert Using the On-Merge Clause

- 6.9. Explicitly Indexing Named Windows and Tables

- 6.10. Using Fire-and-Forget Queries With Named Windows and Tables

- 6.11. Events as Property

- 7. EPL Reference: Patterns

- 8. EPL Reference: Match Recognize

- 8.1. Overview

- 8.2. Comparison of Match Recognize and EPL Patterns

- 8.3. Syntax

- 8.4. Pattern and Pattern Operators

- 8.4.1. Operator Precedence

- 8.4.2. Concatenation

- 8.4.3. Alternation

- 8.4.4. Quantifiers Overview

- 8.4.5. Permutations

- 8.4.6. Variables Can Be Singleton or Group

- 8.4.7. Eliminating Duplicate Matches

- 8.4.8. Greedy or Reluctant

- 8.4.9. Quantifier - One or More (+ and +?)

- 8.4.10. Quantifier - Zero or More (* and *?)

- 8.4.11. Quantifier - Zero or One (? and ??)

- 8.4.12. Repetition - Exactly N Matches

- 8.4.13. Repetition - N or More Matches

- 8.4.14. Repetition - Between N and M Matches

- 8.4.15. Repetition - Between Zero and M Matches

- 8.4.16. Repetition Equivalence

- 8.5. Define Clause

- 8.6. Measure Clause

- 8.7. Datawindow-Bound

- 8.8. Interval

- 8.9. Interval-or-Terminated

- 8.10. Use With Different Event Types

- 8.11. Limiting Runtime-Wide State Count

- 8.12. Limitations

- 9. EPL Reference: Operators

- 9.1. Arithmetic Operators

- 9.2. Logical and Comparison Operators

- 9.3. Concatenation Operators

- 9.4. Binary Operators

- 9.5. Array Definition Operator

- 9.6. Array Element Operator

- 9.7. Dot Operator

- 9.8. The 'In' Keyword

- 9.9. The 'Between' Keyword

- 9.10. The 'Like' Keyword

- 9.11. The 'Regexp' Keyword

- 9.12. The 'Any' and 'Some' Keywords

- 9.13. The 'All' Keyword

- 9.14. The 'New' Keyword

- 10. EPL Reference: Functions

- 10.1. Single-Row Function Reference

- 10.1.1. The Case Control Flow Function

- 10.1.2. The Cast Function

- 10.1.3. The Coalesce Function

- 10.1.4. The Current_Evaluation_Context Function

- 10.1.5. The Current_Timestamp Function

- 10.1.6. The Event_Identity_Equals Function

- 10.1.7. The Exists Function

- 10.1.8. The Grouping Function

- 10.1.9. The Grouping_Id Function

- 10.1.10. The Instance-Of Function

- 10.1.11. The Istream Function

- 10.1.12. The Min and Max Functions

- 10.1.13. The Previous Function

- 10.1.14. The Previous-Tail Function

- 10.1.15. The Previous-Window Function

- 10.1.16. The Previous-Count Function

- 10.1.17. The Prior Function

- 10.1.18. The Type-Of Function

- 10.2. Aggregation Functions

- 10.3. User-Defined Functions

- 10.4. Select-Clause Transpose Function

- 11. EPL Reference: Enumeration Methods

- 11.1. Overview

- 11.2. Example Events

- 11.3. How to Use

- 11.4. Inputs

- 11.4.1. Subquery Results

- 11.4.2. Named Window

- 11.4.3. Table

- 11.4.4. Event Property and Insert-Into With @eventbean

- 11.4.5. Event Aggregation Function

- 11.4.6. Prev, Prevwindow and Prevtail Single-Row Functions as Input

- 11.4.7. Single-Row Function, User-Defined Function and Enum Types

- 11.4.8. Declared Expression

- 11.4.9. Variables

- 11.4.10. Substitution Parameters

- 11.4.11. Match-Recognize Group Variable

- 11.4.12. Pattern Repeat and Repeat-Until Operators

- 11.5. Example

- 11.6. Reference

- 11.6.1. Aggregate

- 11.6.2. AllOf

- 11.6.3. AnyOf

- 11.6.4. ArrayOf

- 11.6.5. Average

- 11.6.6. CountOf

- 11.6.7. DistinctOf

- 11.6.8. Except

- 11.6.9. FirstOf

- 11.6.10. GroupBy

- 11.6.11. Intersect

- 11.6.12. LastOf

- 11.6.13. LeastFrequent

- 11.6.14. Max

- 11.6.15. MaxBy

- 11.6.16. Min

- 11.6.17. MinBy

- 11.6.18. MostFrequent

- 11.6.19. OrderBy and OrderByDesc

- 11.6.20. Reverse

- 11.6.21. SelectFrom

- 11.6.22. SequenceEqual

- 11.6.23. SumOf

- 11.6.24. Take

- 11.6.25. TakeLast

- 11.6.26. TakeWhile

- 11.6.27. TakeWhileLast

- 11.6.28. ToMap

- 11.6.29. Union

- 11.6.30. Where

- 12. EPL Reference: Date-Time Methods

- 12.1. Overview

- 12.2. How to Use

- 12.3. Calendar and Formatting Reference

- 12.4. Interval Algebra Reference

- 12.4.1. Examples

- 12.4.2. Interval Algebra Parameters

- 12.4.3. Performance

- 12.4.4. Limitations

- 12.4.5. After

- 12.4.6. Before

- 12.4.7. Coincides

- 12.4.8. During

- 12.4.9. Finishes

- 12.4.10. Finished By

- 12.4.11. Includes

- 12.4.12. Meets

- 12.4.13. Met By

- 12.4.14. Overlaps

- 12.4.15. Overlapped By

- 12.4.16. Starts

- 12.4.17. Started By

- 13. EPL Reference: Aggregation Methods

- 13.1. Overview

- 13.2. How to Use

- 13.3. Aggregation Methods for Sorted Aggregations

- 13.3.1. Overview

- 13.3.2. Specifying Composite Keys

- 13.3.3. CeilingEvent, FloorEvent, HigherEvent, LowerEvent, GetEvent

- 13.3.4. CeilingEvents, FloorEvents, HigherEvents, LowerEvents, GetEvents

- 13.3.5. CeilingKey, FloorKey, HigherKey, LowerKey

- 13.3.6. FirstEvent, LastEvent, MinBy, MaxBy

- 13.3.7. FirstEvents, LastEvents

- 13.3.8. FirstKey, LastKey

- 13.3.9. ContainsKey

- 13.3.10. CountEvents

- 13.3.11. CountKeys

- 13.3.12. EventsBetween

- 13.3.13. Submap

- 13.3.14. NavigableMapReference

- 13.4. Aggregation Methods for Window Aggregations

- 13.5. Aggregation Methods for CountMinSketch Aggregations

- 13.6. Aggregation Methods for Custom Plug-In Multi-Function Aggregations

- 14. EPL Reference: Data Windows

- 14.1. A Note on Data Window Name and Parameters

- 14.2. A Note on Batch Windows

- 14.3. Data Windows

- 14.3.1. Length Window (length or win:length)

- 14.3.2. Length Batch Window (length_batch or win:length_batch)

- 14.3.3. Time Window (time or win:time)

- 14.3.4. Externally-timed Window (ext_timed or win:ext_timed)

- 14.3.5. Time batch Window (time_batch or win:time_batch)

- 14.3.6. Externally-timed Batch Window (ext_timed_batch or win:ext_timed_batch)

- 14.3.7. Time-Length Combination Batch Window (time_length_batch or win:time_length_batch)

- 14.3.8. Time-Accumulating Window (time_accum or win:time_accum)

- 14.3.9. Keep-All Window (keepall or win:keepall)

- 14.3.10. First Length Window(firstlength or win:firstlength)

- 14.3.11. First Time Window (firsttime or win:firsttime)

- 14.3.12. Expiry Expression Window (expr or win:expr)

- 14.3.13. Expiry Expression Batch Window (expr_batch or win:expr_batch)

- 14.3.14. Unique Window (unique or std:unique)

- 14.3.15. Grouped Data Window (groupwin or std:groupwin)

- 14.3.16. Last Event Window (std:lastevent)

- 14.3.17. First Event Window (firstevent or std:firstevent)

- 14.3.18. First Unique Window (firstunique or std:firstunique)

- 14.3.19. Sorted Window (sort or ext:sort)

- 14.3.20. Ranked Window (rank or ext:rank)

- 14.3.21. Time-Order Window (time_order or ext:time_order)

- 14.3.22. Time-To-Live Window (timetolive or ext:timetolive)

- 14.4. Special Derived-Value Windows

- 14.4.1. Size Derived-Value Window (size) or std:size)

- 14.4.2. Univariate Statistics Derived-Value Window (uni or stat:uni)

- 14.4.3. Regression Derived-Value Window (linest or stat:linest)

- 14.4.4. Correlation Derived-Value Window (correl or stat:correl)

- 14.4.5. Weighted Average Derived-Value Window (weighted_avg or stat:weighted_avg)

- 15. Compiler Reference

- 15.1. Introduction

- 15.2. Concepts

- 15.3. Compiling a Module

- 15.4. Reading and Writing a Compiled Module

- 15.5. Reading Module Content

- 15.6. Compiler Arguments

- 15.7. Statement Object Model

- 15.8. Substitution Parameters

- 15.9. OSGi, Class Loader, Class-For-Name

- 15.10. Authoring Tools

- 15.11. Testing Tools

- 15.12. Debugging

- 15.13. Ordering Multiple Modules

- 15.14. Logging

- 15.15. Debugging Generated Code

- 15.16. Compiler Version and Runtime Version

- 15.17. Compiler Byte Code Optimizations

- 15.18. Compiler Filter Expression Analysis

- 15.19. Limitations

- 16. Runtime Reference

- 16.1. Introduction

- 16.2. Obtaining a Runtime From EPRuntimeProvider

- 16.3. The EPRuntime Runtime Interface

- 16.4. Deploying and Undeploying Using EPDeploymentService

- 16.5. Obtaining Results Using EPStatement

- 16.6. Processing Events and Time Using EPEventService

- 16.7. Execute Fire-and-Forget Queries Using EPFireAndForgetService

- 16.8. Runtime Threading and Concurrency

- 16.9. Controlling Time-Keeping

- 16.10. Exception Handling

- 16.11. Condition Handling

- 16.12. Runtime and Statement Metrics Reporting

- 16.13. Monitoring and JMX

- 16.14. Event Rendering to XML and JSON

- 16.15. Plug-In Loader

- 16.16. Context Partition Selection

- 16.17. Context Partition Administration

- 16.18. Test and Assertion Support

- 16.19. OSGi, Class Loader, Class-For-Name

- 16.20. When Deploying with J2EE

- 16.21. Stages

- 17. Configuration

- 17.1. Overview

- 17.2. Programmatic Configuration

- 17.3. Configuration via XML File

- 17.4. Configuration Common

- 17.4.1. Annotation Class and Package Imports

- 17.4.2. Class and Package Imports

- 17.4.3. Events Represented by Classes

- 17.4.4. Events Represented by java.util.Map

- 17.4.5. Events Represented by Object[] (Object-array)

- 17.4.6. Events Represented by JSON

- 17.4.7. Events Represented by Avro GenericData.Record

- 17.4.8. Events Represented by org.w3c.dom.Node

- 17.4.9. Event Type Defaults

- 17.4.10. Event Type Import Package (Event Type Auto-Name)

- 17.4.11. From-Clause Method Invocation

- 17.4.12. Relational Database Access

- 17.4.13. Common Settings Related to Logging

- 17.4.14. Common Settings Related to Time Source

- 17.4.15. Variables

- 17.4.16. Variant Stream

- 17.5. Configuration Compiler

- 17.5.1. Compiler Settings Related to Byte Code Generation

- 17.5.2. Compiler Settings Related to View Resources

- 17.5.3. Compiler Settings Related to Logging

- 17.5.4. Compiler Settings Related to Stream Selection

- 17.5.5. Compiler Settings Related to Language and Locale

- 17.5.6. Compiler Settings Related to Expression Evaluation

- 17.5.7. Compiler Settings Related to Scripts

- 17.5.8. Compiler Settings Related to Execution of Statements

- 17.5.9. Compiler Settings Related to Serializers and Deserializers

- 17.6. Configuration Runtime

- 17.6.1. Runtime Settings Related to Concurrency and Threading

- 17.6.2. Runtime Settings Related to Logging

- 17.6.3. Runtime Settings Related to Variables

- 17.6.4. Runtime Settings Related to Patterns

- 17.6.5. Runtime Settings Related to Match-Recognize

- 17.6.6. Runtime Settings Related to Time Source

- 17.6.7. Runtime Settings Related to JMX Metrics

- 17.6.8. Runtime Settings Related to Metrics Reporting

- 17.6.9. Runtime Settings Related to Expression Evaluation

- 17.6.10. Runtime Settings Related to Execution of Statements

- 17.6.11. Runtime Settings Related to Exception Handling

- 17.6.12. Runtime Settings Related to Condition Handling

- 17.7. Passing Services or Transient Objects

- 17.8. Type Names

- 17.9. Logging Configuration

- 18. Inlined Classes

- 19. Script Support

- 20. EPL Reference: Spatial Methods and Indexes

- 20.1. Overview

- 20.2. Spatial Methods

- 20.3. Spatial Index - Quadtree

- 20.3.1. Overview

- 20.3.2. Declaring a Point-Region Quadtree Index

- 20.3.3. Using a Point-Region Quadtree as a Filter Index

- 20.3.4. Using a Point-Region Quadtree as an Event Index

- 20.3.5. Declaring a MX-CIF Quadtree Index

- 20.3.6. Using a MX-CIF Quadtree as a Filter Index

- 20.3.7. Using a MX-CIF Quadtree as an Event Index

- 20.4. Spatial Types, Functions and Methods from External Libraries

- 21. EPL Reference: Data Flow

- 22. Integration and Extension

- 22.1. Overview

- 22.2. Single-Row Function

- 22.2.1. Using an Inlined Class to Provide a Single-Row Function

- 22.2.2. Using an Application Class to Provide a Single-Row Function

- 22.2.3. Value Cache

- 22.2.4. Single-Row Functions in Filter Predicate Expressions

- 22.2.5. Single-Row Functions Taking Events as Parameters

- 22.2.6. Single-Row Functions Returning Events

- 22.2.7. Receiving a Context Object

- 22.2.8. Exception Handling

- 22.3. Virtual Data Window

- 22.4. Data Window View and Derived-Value View

- 22.5. Aggregation Function

- 22.6. Pattern Guard

- 22.7. Pattern Observer

- 22.8. Date-Time Method

- 22.9. Enumeration Method

- 22.9.1. Implement the EnumMethodForgeFactory Interface

- 22.9.2. Implement the EnumMethodState Interface

- 22.9.3. Implement the Static Method for Processing

- 22.9.4. Add the Enumeration Method Extension to the Compiler Configuration

- 22.9.5. Use the new Enumeration Method

- 22.9.6. Further Information to Lambda Parameters

- 23. Examples, Tutorials, Case Studies

- 23.1. Examples Overview

- 23.2. Running the Examples

- 23.3. AutoID RFID Reader

- 23.4. Runtime Configuration

- 23.5. JMS Server Shell and Client

- 23.6. Market Data Feed Monitor

- 23.7. OHLC Plug-In Data Window

- 23.8. Transaction 3-Event Challenge

- 23.9. Self-Service Terminal

- 23.10. Assets Moving Across Zones - An RFID Example

- 23.11. StockTicker

- 23.12. MatchMaker

- 23.13. Named Window Query

- 23.14. Sample Virtual Data Window

- 23.15. Sample Cycle Detection

- 23.16. Quality of Service

- 23.17. Trivia Geeks Club

- 24. Performance

- 24.1. Big O Notation

- 24.1.1. Big-O Complexity of Matching Events to Statements and Context Partitions

- 24.1.2. Big-O Complexity of Matching Time to Statements and Context Partitions

- 24.1.3. Big-O Complexity of Joins, Subqueries, On-Select, On-Merge, On-Update, On-Delete

- 24.1.4. Big-O Complexity of Enumeration Methods

- 24.1.5. Big-O Complexity of Aggregation Methods

- 24.2. Performance Tips

- 24.2.1. Understand How to Tune Your Java Virtual Machine

- 24.2.2. Input and Output Bottlenecks

- 24.2.3. Threading

- 24.2.4. Select the Underlying Event Rather Than Individual Fields

- 24.2.5. Prefer Stream-Level Filtering Over Where-Clause Filtering

- 24.2.6. Reduce the Use of Arithmetic in Expressions

- 24.2.7. Remove Unneccessary Constructs

- 24.2.8. End Pattern Sub-Expressions

- 24.2.9. Consider Using EventPropertyGetter for Fast Access to Event Properties

- 24.2.10. Consider Casting the Underlying Event

- 24.2.11. Turn Off Logging and Audit

- 24.2.12. Tune or Disable Delivery Order Guarantees

- 24.2.13. Use a Subscriber Object to Receive Events

- 24.2.14. Consider Data Flows

- 24.2.15. High-Arrival-Rate Streams and Single Statements

- 24.2.16. Subqueries Versus Joins and Where-Clause and Data Windows

- 24.2.17. Patterns and Pattern Sub-Expression Instances

- 24.2.18. Pattern Sub-Expression Instance Versus Data Window Use

- 24.2.19. The Keep-All Data Window

- 24.2.20. Statement Design for Reduced Memory Consumption - Diagnosing OutOfMemoryError

- 24.2.21. Performance, JVM, OS and Hardware

- 24.2.22. Consider Using Hints

- 24.2.23. Optimizing Stream Filter Expressions

- 24.2.24. Statement and Runtime Metric Reporting

- 24.2.25. Expression Evaluation Order and Early Exit

- 24.2.26. Large Number of Threads

- 24.2.27. Filter Evaluation Tuning

- 24.2.28. Context Partition Related Information

- 24.2.29. Prefer Constant Variables Over Non-Constant Variables

- 24.2.30. Prefer POJO Events or alternatively Object-Array Events

- 24.2.31. Notes on Query Planning

- 24.2.32. Query Planning Expression Analysis Hints

- 24.2.33. Query Planning Index Hints

- 24.2.34. Measuring Throughput

- 24.2.35. Do Not Create the Same or Similar Statement X Times

- 24.2.36. Comparing Single-Threaded and Multi-Threaded Performance

- 24.2.37. Incremental Versus Recomputed Aggregation for Named Window Events

- 24.2.38. When Does Memory Get Released

- 24.2.39. Measure Throughput of Non-Matches as Well as Watches

- 24.2.40. Options for When an Event Type has a Large Number of Event Properties i.e. Large Events

- 24.3. Using the Performance Kit

- 25. References

- A. Output Reference and Samples

- A.1. Introduction and Sample Data

- A.2. Output for Un-Aggregated and Un-Grouped Statements

- A.3. Output for Fully-Aggregated and Un-Grouped Statements

- A.4. Output for Aggregated and Un-Grouped Statements

- A.5. Output for Fully-Aggregated and Grouped Statements

- A.6. Output for Aggregated and Grouped Statements

- A.7. Output for Fully-Aggregated, Grouped Statements With Rollup

- B. Runtime Considerations for Output Rate Limiting

- C. Reserved Keywords

- D. Event Representation: Plain-Old Java Object Events

- E. Event Representation: java.util.Map Events

- F. Event Representation: Object-Array (Object[]) Events

- G. Event Representation: JSON Events

- G.1. Overview

- G.2. JSON Event Type

- G.3. JSON Object Nesting

- G.4. JSON Supported Types

- G.5. JSON Application-Provided Class

- G.6. JSON Dynamic Event Properties

- G.7. API for Parsing JSON Documents

- G.8. API for Building JSON Documents

- G.9. Customizing JSON Serializing and Deserializing

- G.10. Customizing the JSON Event Class

- G.11. Limitations

- H. Event Representation: Avro Events (org.apache.avro.generic.GenericData.Record)

- H.1. Overview

- H.2. Avro Event Type

- H.3. Avro Schema Name Requirement

- H.4. Avro Field Schema to Property Type Mapping

- H.5. Primitive Data Type and Class to Avro Schema Mapping

- H.6. Customizing Avro Schema Assignment

- H.7. Customizing Class-to-Avro Schema

- H.8. Customizing Object-to-Avro Field Value Assignment

- H.9. API Examples

- H.10. Limitations

- I. Event Representation: org.w3c.dom.Node XML Events

- J. NEsper .NET -Specific Information

- J.1. .NET General Information

- J.2. .NET and Annotations

- J.3. .NET and Locks and Concurrency

- J.4. .NET and Threading

- J.5. .NET and Timer

- J.6. .NET NEsper Configuration

- J.7. .NET Event Underlying Objects

- J.8. .NET Object Events

- J.9. .NET IDictionary Events

- J.10. .NET XML Events

- J.11. .NET Event Objects Instantiated and Populated by Insert Into

- J.12. .NET Basic Concepts

- J.13. .NET EPL Syntax - Data Types

- J.14. .NET Accessing Relational Data via SQL

- J.15. .NET API - Receiving Statement Results

- J.16. .NET API - Adding Listeners

- J.17. .NET Configurations - Relational Database Access

- J.18. .NET Configurations - Logging Configuration

- Index

Analyzing and reacting to information in real-time oftentimes requires the development of custom applications. Typically these applications must obtain the data to analyze, filter data, derive information and then indicate this information through some form of presentation or communication. Data may arrive with high frequency requiring high throughput processing. And applications may need to be flexible and react to changes in requirements while the data is processed. Esper is an event stream processor that aims to enable a short development cycle from inception to production for these types of applications.

This document is a resource for software developers who develop event driven applications. It also contains information that is useful for business analysts and system architects who are evaluating Esper.

It is assumed that the reader is familiar with the Java programming language.

For NEsper .NET the reader is is familiar with the C# programming language. For NEsper .NET, please also review Appendix J, NEsper .NET -Specific Information.

This document is relevant in all phases of your software development project: from design to deployment and support.

If you are new to Esper, please follow these steps:

Read the tutorials, case studies and solution patterns available on the Esper public web site at

http://www.espertech.com/esperRead Chapter 1, Getting Started if you are new to CEP and streaming analytics

Read Chapter 2, Basic Concepts to gain insight into EPL basic concepts

Read Chapter 3, Event Representations that explains the different ways of representing events to Esper

Read Section 5.1, “EPL Introduction” for an introduction to event stream processing via EPL

Read Section 7.1, “Event Pattern Overview” for an overview over event patterns

Read Section 8.1, “Overview” for an overview over event patterns using the match recognize syntax.

Then glance over the examples Section 23.1, “Examples Overview”

Finally to test drive Esper performance, read Chapter 24, Performance

The Esper compiler and runtime have been developed to address the requirements of applications that analyze and react to events. Some typical examples of applications are:

Business process management and automation (process monitoring, BAM, reporting exceptions)

Finance (algorithmic trading, fraud detection, risk management)

Network and application monitoring (intrusion detection, SLA monitoring)

Sensor network applications (RFID reading, scheduling and control of fabrication lines, air traffic)

What these applications have in common is the requirement to process events (or messages) in real-time or near real-time. This is sometimes referred to as complex event processing (CEP) and event series analysis. Key considerations for these types of applications are throughput, latency and the complexity of the logic required.

High throughput - applications that process large volumes of messages (between 1,000 to 100k messages per second)

Low latency - applications that react in real-time to conditions that occur (from a few milliseconds to a few seconds)

Complex computations - applications that detect patterns among events (event correlation), filter events, aggregate time or length windows of events, join event series, trigger based on absence of events etc.

The EPL compiler and runtime were designed to make it easier to build and extend CEP applications.

More information on CEP can be found at FAQ.

Esper is a language, a language compiler and a runtime environment.

The Esper language is the Event Processing Language (EPL). It is a declarative, data-oriented language for dealing with high frequency time-based event data. EPL is compliant to the SQL-92 standard and extended for analyzing series of events and in respect to time.

The Esper compiler compiles EPL source code into Java Virtual Machine (JVM) bytecode so that the resulting executable code runs on a JVM within the Esper runtime environment.

The Esper runtime runs on top of a JVM. You can run byte code produced by the Esper compiler using the Esper runtime.

The Esper architecture is similar to that of other programming languages that are compiled to JVM bytecode, such as Scala, Clojure and Kotlin for example. Esper EPL however is not an imperative (procedural) programming language.

The Esper language is the Event Processing Language (EPL) designed for Complex Event Processing and Streaming Analytics.

EPL is organized into modules. Modules are compiled into byte code by the compiler. We use the term module for an EPL source code unit.

A module consists of statements. Statements are the declarative code for performing event and time analysis. Most statements are in the form of "select ... from ...".

We use the term statement for each unit of declarative code that makes up a module.

Your application receives output from statements via callback or by iterating current results of a statement.

A statement can declare an EPL-object such as listed below:

Event types define stream type information and are added using

create schemaor by configuration.Variables are free-form value holders and are added using

create variableor by configuration.Named windows are sharable named data windows and are added using

create window.Tables are sharable organized rows with columns that are simple, aggregation and complex types, and are added using

create table.Contexts define analysis lifecycle and are added using

create context.Expressions and Scripts are reusable expressions and are added using

create expression.Indexes organize named window events and table rows for fast lookup and are added using

create index.

Use access modifiers such as private, protected and public to control access to EPL-objects.

A module can optionally have a module name. The module name has a similar use as the package name or namespace name in a programming language. A module name is used to organize EPL objects and to avoid name conflicts.

When deploying a compiled module the runtime assigns a deployment id to the deployment. The deployment id uniquely identifies a given deployment of a compiled module. A compiled module can be parameterized and deployed multiple times.

A statement always has a statement name. The statement name identifies a statement within a deployed module and is unique within a deployment. The combination of deployment id and statement name uniquely identifies a statement within a runtime.

EPL is type-safe in that EPL does not allow performing an operation on an object that is invalid for that object.

Please add the Esper compiler jar file, the common jar file and the compiler dependencies to the classpath of the program that will be compiling EPL.

The jar files listed here are not required for the runtime except for esper-common-version.jar.

Common jar file

esper-common-version.jarCompiler jar file

esper-compiler-version.jarANTLR parser jar file

antlr4-runtime-4.7.2.jarSLF4J logging library

slf4j-api-1.7.26.jarJanino Java compiler

janino-3.1.0.jarandcommons-compiler-3.1.0.jar

Optionally, for logging using Log4j, please add slf4j-log4j12-1.7.26.jar and log4j-1.2.17.jar to the classpath.

There are no additional jar files required by Esper for using Esper with JSON-formatted event documents.

Optionally, for using Apache Avro, please add esper-common-avro-version.jar to the classpath.

Optionally, for using XML XSD schemas for event types, please add esper-common-xmlxsd-version.jar to the classpath.

Your application can register an event type to instruct the compiler what the input events look like. When compiling modules the compiler checks the available event type information to determine that the module is valid.

This example assumes that there is a Java class PersonEvent and each instance of the PersonEvent class is an event.

Tip

It is not necessary to create classes for each event type.

It is not necessary to preconfigure each event type. It is not necessary to set up a Configuration object.

This step-by-step keeps it simple to get you started.

Our event class for the step-by-step is:

package com.mycompany.myapp;

public class PersonEvent {

private String name;

private int age;

public PersonEvent(String name, int age) {

this.name = name;

this.age = age;

}

public String getName() {

return name;

}

public int getAge() {

return age;

}

}The different event representations are discussed at Section 3.5, “Comparing Event Representations”.

For declaring event types using EPL with create schema, see Section 5.15, “Declaring an Event Type: Create Schema”.

Your application can obtain a compiler calling the getCompiler static method of the EPCompilerProvider class:

EPCompiler compiler = EPCompilerProvider.getCompiler();

The step-by-step provides a Configuration object to the compiler that adds the predefined person event:

Configuration configuration = new Configuration(); configuration.getCommon().addEventType(PersonEvent.class);

The sample module for this getting-started section simply has one statement that selects the name and the age of each arriving person event.

It specifies a statement name using the @name annotation and assigns a name my-statement to the statement.

@name('my-statement') select name, age from PersonEvent

Compile a module by using the compile method passing the configuration as part of the compiler arguments:

CompilerArguments args = new CompilerArguments(configuration);

EPCompiled epCompiled;

try {

epCompiled = compiler.compile("@name('my-statement') select name, age from PersonEvent", args);

}

catch (EPCompileException ex) {

// handle exception here

throw new RuntimeException(ex);

}

Upon compiling this module, the compiler verifies that PersonEvent exists since it is listed in the from-clause.

The compiler also verifies that the name and age properties are available for the PersonEvent since they are listed in the select-clause.

The compiler generates byte code for extracting property values and producing output events.

The compiler builds internal data structures for later use by filter indexes to ensure that when a PersonEvent comes in it will be processed fast.

More information on the compile API can be found at Chapter 15, Compiler Reference and the JavaDoc.

Please add the Esper common jar file, the runtime jar file and the runtime dependencies to the classpath of the program that will be executing compiled modules. The runtime jar file is not required for the compiler.

Common jar file

esper-common-version.jarRuntime jar file

esper-runtime-version.jarSLF4J logging library

slf4j-api-1.7.25.jar

Optionally, for logging using Log4j, please add slf4j-log4j12-1.7.25.jar and log4j-1.2.17.jar to the classpath.

There are no additional jar files required by Esper for using Esper with JSON-formatted event documents.

Optionally, for using Apache Avro, please add esper-common-avro-version.jar to the classpath.

Optionally, for using XML XSD schemas for event types, please add esper-common-xmlxsd-version.jar to the classpath.

The step-by-step provides a Configuration object to the runtime that adds the predefined person event:

Configuration configuration = new Configuration(); configuration.getCommon().addEventType(PersonEvent.class);

Tip

It is not necessary to preconfigure each event type. It is not necessary to set up a Configuration object.

For this example however since the compiler knows PersonEvent as a predefined type and it must this be preconfigured for the runtime as well.

Your application can obtain a runtime by calling the getDefaultRuntime static method of the EPRuntimeProvider class and passing the configuration:

EPRuntime runtime = EPRuntimeProvider.getDefaultRuntime(configuration);

More information about the runtime can be found at Chapter 16, Runtime Reference and the JavaDoc.

More information about configuration can be found at Chapter 17, Configuration and the JavaDoc.

Your application can deploy a compiled module using the deploy method of the administrative interface.

The API calls are:

EPDeployment deployment;

try {

deployment = runtime.getDeploymentService().deploy(epCompiled);

}

catch (EPDeployException ex) {

// handle exception here

throw new RuntimeException(ex);

}

As part of deployment, the runtime verifies that all module dependencies, such as event types, do indeed exist.

During deployment the runtime adds entries to filter indexes to ensure that when a PersonEvent comes in it will be processed fast.

Your application can attach a callback to the EPStatement to receive statement results. The following sample callback simply prints name and age:

EPStatement statement = runtime.getDeploymentService().getStatement(deployment.getDeploymentId(), "my-statement");

statement.addListener( (newData, oldData, statement, runtime) -> {

String name = (String) newData[0].get("name");

int age = (int) newData[0].get("age");

System.out.println(String.format("Name: %s, Age: %d", name, age));

});Your application can provide different kinds of callbacks, see Table 16.2, “Choices For Receiving Statement Results”.

Your application can send events into the runtime using the sendEventBean method (or other sendEvent method matching your choice of event) that is part of the runtime interface:

runtime.getEventService().sendEventBean(new PersonEvent("Peter", 10), "PersonEvent");The output you should see is:

Name: Peter, Age: 10

Upon sending the PersonEvent event object to the runtime, the runtime consults the internally-maintained shared filter index tree structure to determine if any statement is interested in PersonEvent events.

The statement that was deployed as part of this example has PersonEvent in the from-clause, thus the runtime delegates processing of such events to the statement.

The compiled bytecode obtains the name and age properties by calling the getName and getAge methods.

The compiler and runtime both require the following 3rd-party libraries:

SLF4J is a logging API that can work together with LOG4J and other logging APIs. While SLF4J is required, the LOG4J log component is not required and can be replaced with other loggers. SLF4J is licensed under Apache 2.0 license as provided in

lib/esper_3rdparties.license.

The compiler requires the following 3rd-party libraries for compiling only (and not at runtime):

ANTLR is the parser generator used for parsing and parse tree walking of the pattern and EPL syntax. Credit goes to Terence Parr at http://www.antlr.org. The ANTLR license is a BSD license and is provided in

lib/esper_3rdparties.license. Theantlr-runtimeruntime library is required for runtime.Janino is a small and fast Java compiler. The compiler generates code and compiles generated code using Janino. Janino is licensed under 3-clause New BSD License as provided in

lib/esper_3rdparties.license.

- 2.1. Introduction

- 2.2. Basic Select

- 2.3. Basic Aggregation

- 2.4. Basic Filter

- 2.5. Basic Filter and Aggregation

- 2.6. Basic Data Window

- 2.7. Basic Data Window and Aggregation

- 2.8. Basic Filter, Data Window and Aggregation

- 2.9. Basic Where-Clause

- 2.10. Basic Time Window and Aggregation

- 2.11. Basic Partitioned Statement

- 2.12. Basic Output-Rate-Limited Statement

- 2.13. Basic Partitioned and Output-Rate-Limited Statement

- 2.14. Basic Named Windows and Tables

- 2.15. Basic Aggregated Statement Types

- 2.16. Basic Match-Recognize Patterns

- 2.17. Basic EPL Patterns

- 2.18. Basic Indexes

- 2.19. Basic Null

For NEsper .NET also see Section J.12, “.NET Basic Concepts”.

Statements are continuous queries that analyze events and time and that detect situations.

You interact with Esper by compiling and deploying modules that contain statements, by sending events and advancing time and by receiving output by means of callbacks or by polling for current results.

Table 2.1. Interacting With Esper

| What | How |

|---|---|

| EPL | First, compile and deploy statements, please refer to Chapter 5, EPL Reference: Clauses,Chapter 15, Compiler Reference and Chapter 16, Runtime Reference. |

| Callbacks | Second, attach executable code that your application provides to receive output, please refer to Table 16.2, “Choices For Receiving Statement Results”. |

| Events | Next, send events using the runtime API, please refer to Section 16.6, “Processing Events and Time Using EPEventService”. |

| Time | Next, advance time using the runtime API or system time, please refer to Section 16.9, “Controlling Time-Keeping”. |

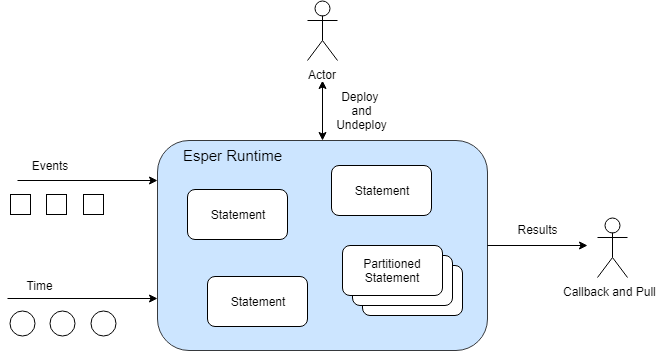

The runtime contains statements like so:

Statements can be partitioned. A partitioned statement can have multiple partitions. For example, there could be partition for each room in a building. For a building with 10 rooms you could have one statement that has 10 partitions. Please refer to Chapter 4, Context and Context Partitions.

A statement that is not partitioned implicitly has one partition. Upon deploying the un-partitioned statement the runtime allocates the single partition. Upon undeploying the un-partitioned statement the runtime destroys the partition.

A partition (or context partition) is where the runtime keeps the state. In the picture above there are three un-partitioned statement and one partitioned statement that has three partitions.

The next sections discuss various easily-understood statements.

The sections illustrate how statements behave, the information that the runtime passes to callbacks (the output) and what information the runtime remembers for statements (the state, all state lives in a partition).

The sample statements assume an event type by name Withdrawal that has account and amount properties.

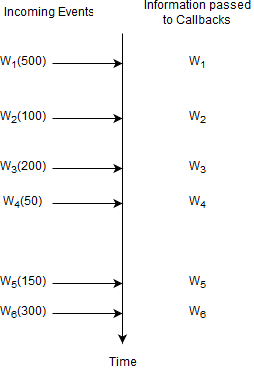

This statement selects all Withdrawal events.

select * from Withdrawal

Upon a new Withdrawal event arriving, the runtime passes the arriving event, unchanged and the same object reference, to callbacks.

After that the runtime effectively forgets the current event.

The diagram below shows a series of Withdrawal events (1 to 6) arriving over time.

In the picture the Wn stands for a specific Withdrawal event arriving. The number in parenthesis is the withdrawal amount.

For this statement, the runtime remembers no information and does not remember any events. A statement where the runtime does not need to remember any information at all is a statement without state (a stateless statement).

The term insert stream is a name for the stream of new events that are arriving. The insert stream in this example is the stream of arriving Withdrawal events.

An aggregation function is a function that groups multiple events together to form a single value. Please find more information at Section 10.2, “Aggregation Functions”.

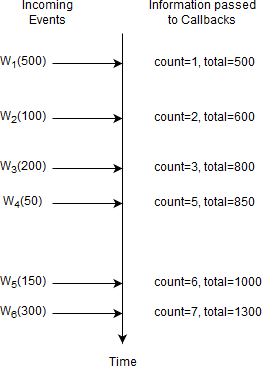

This statement selects a count and a total amount of all Withdrawal events.

select count(*), sum(amount) from Withdrawal

Upon a new Withdrawal event arriving, the runtime increments the count and adds the amount to a running total. It passes the new count and total to callbacks.

After that the runtime effectively forgets the current event and does not remember any events at all, but does remember the current count and total.

Here, the runtime only remembers the current number of events and the total amount.

The count is a single long-type value and the total is a single double-type value (assuming amount is a double-value, the total can be BigDecimal as applicable).

This statement is not stateless and the state consists of a long-typed value and a double-typed value.

Upon a new Withdrawal event arriving, the runtime increases the count by one and adds the amount to the running total. the runtime does not re-compute the count and total because it does not remember events.

In general, the runtime does not re-compute aggregations (unless otherwise indicated). Instead, the runtime adds (increments, enters, accumulates) data to aggregation state and subtracts (decrements, removes, reduces, decreases) from aggregation state.

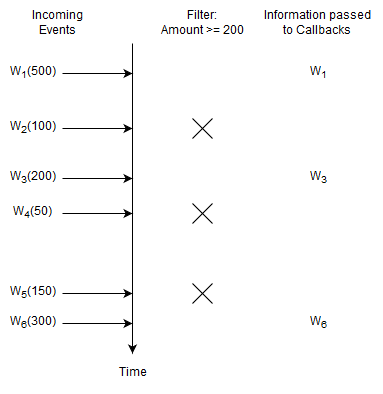

Place filter expressions in parenthesis after the event type name. For further information see Section 5.4.1, “Filter-Based Event Streams”.

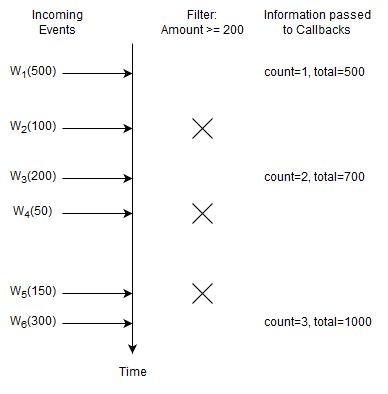

This statement selects Withdrawal events that have an amount of 200 or higher:

select * from Withdrawal(amount >= 200)

Upon a new Withdrawal event with an amount of 200 or higher arriving, the runtime passes the arriving event to callbacks.

For this statement, the runtime remembers no information and does not remember any events.

You may ask what happens for Withdrawal events with an amount of less than 200. The answer is that the statement itself does not even see such events. This is because the runtime knows to discard such events right away

and the statement does not even know about such events. The runtime discards unneeded events very fast enabled by statement analysis, planning and suitable data structures.

This statement selects the count and the total amount for Withdrawal events that have an amount of 200 or higher:

select count(*), sum(amount) from Withdrawal(amount >= 200)

Upon a new Withdrawal event with an amount of 200 or higher arriving, the runtime increments the count and adds the amount to the running total. The runtime passes the count and total to callbacks.

In this example the runtime only remembers the count and total and again does not remember events. The runtime discards Withdrawal events with an amount of less than 200.

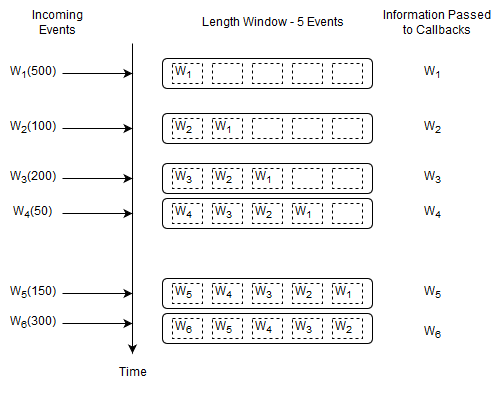

A data window, or window for short, retains events for the purpose of aggregation, join, match-recognize patterns, subqueries, iterating via API and output-snapshot. A data window defines which subset of events to retain. For example, a length window keeps the last N events and a time window keeps the last N seconds of events. See Chapter 14, EPL Reference: Data Windows for details.

This statement selects all Withdrawal events and instructs the runtime to remember the last five events.

select * from Withdrawal#length(5)

Upon a new Withdrawal event arriving, the runtime adds the event to the length window. It also passes the same event to callbacks.

Upon arrival of event W6, event W1 leaves the length window. We use the term expires to say that an event leaves a data window. We use the term remove stream to describe the stream of events leaving a data window.

The runtime remembers up to five events in total (the last five events). At the start of the statement the data window is empty. By itself, keeping the last five events may not sound useful. But in connection with a join, subquery or match-recognize pattern for example a data window tells the runtime which events you want to query.

Note

By default the runtime only delivers the insert stream to listeners and observers. EPL supports optionalistream, irstream and rstream keywords for select- and insert-into clauses to control which streams to deliver, see Section 5.3.7, “Selecting Insert and Remove Stream Events”.

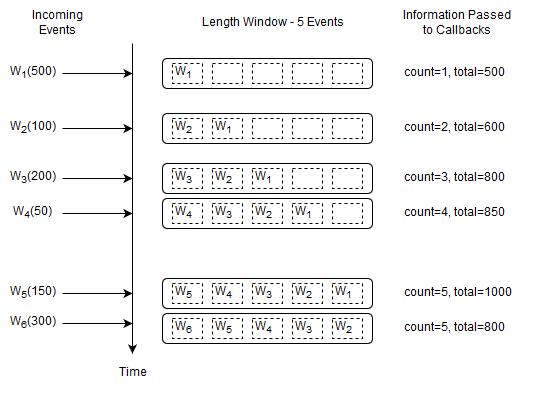

This statement outputs the count and total of the last five Withdrawal events.

select count(*), sum(amount) from Withdrawal#length(5)

Upon a new Withdrawal event arriving, the runtime adds the event to the length window, increases the count by one and adds the amount to the current total amount.

Upon a Withdrawal event leaving the data window, the runtime decreases the count by one and subtracts its amount from the current total amount.

It passes the running count and total to callbacks.

Before the arrival of event W6 the current count is five and the running total amount is 1000. Upon arrival of event W6 the following takes place:

The runtime determines that event W1 leaves the length window.

To account for the new event W6, the runtime increases the count by one and adds 300 to the running total amount.

To account for the expiring event W1, the runtime decreases the count by one and subtracts 500 from the running total amount.

The output is a count of five and a total of 800 as a result of

1000 + 300 - 500.

The runtime adds (increments, enters, accumulates) insert stream events into aggregation state and subtracts (decrements, removes, reduces, decreases) remove stream events from aggregation state. It thus maintains aggregation state in an incremental fashion.

For this statement, once the count reaches 5, the count will always remain at 5.

The information that the runtime remembers for this statement is the last five events and the current long-typed count and double-typed total.

Tip

Use the irstream keyword to receive both the current as well as the previous aggregation value for aggregating statements.

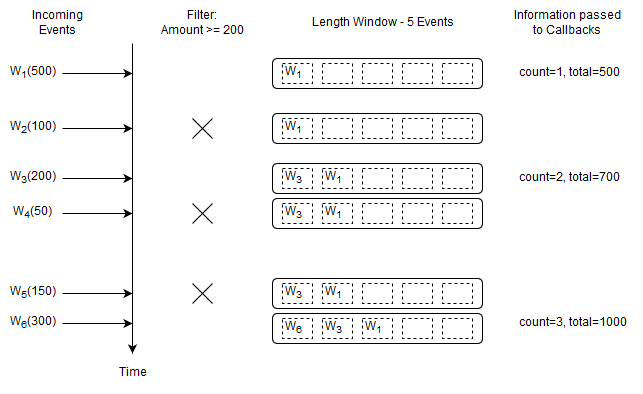

The following statement outputs the count and total of the last five Withdrawal events considering only those Withdrawal events that have an amount of at least 200:

select count(*), sum(amount) from Withdrawal(amount>=200)#length(5)

Upon a new Withdrawal event arriving, and only if that Withdrawal event has an amount of 200 or more, the runtime adds the event to the length window, increases the count by one and adds the amount to the current total amount.

Upon a Withdrawal event leaving the data window, the runtime decreases the count by one and subtracts its amount from the current total amount.

It passes the running count and total to callbacks.

For statements without a data window, the where-clause behaves the same as the filter expressions that are placed in parenthesis.

The following two statements are fully equivalent because of the absence of a data window (the .... means any select-clause expressions):

select .... from Withdrawal(amount > 200) // equivalent to select .... from Withdrawal where amount > 200

Note

In EPL, the where-clause is typically used for correlation in a join or subquery. Filter expressions should be placed right after the event type name in parenthesis.

The next statement applies a where-clause to Withdrawal events. Where-clauses are discussed in more detail in Section 5.5, “Specifying Search Conditions: The Where Clause”.

select * from Withdrawal#length(5) where amount >= 200

The where-clause applies to both new events and expiring events. Only events that pass the where-clause are passed to callbacks.

A time window is a data window that extends the specified time interval into the past. More information on time windows can be found at Section 14.3.3, “Time Window (time or win:time)”.

The next statement selects the count and total amount of Withdrawal events considering the last four seconds of events.

select count(*), sum(amount) as total from Withdrawal#time(4)

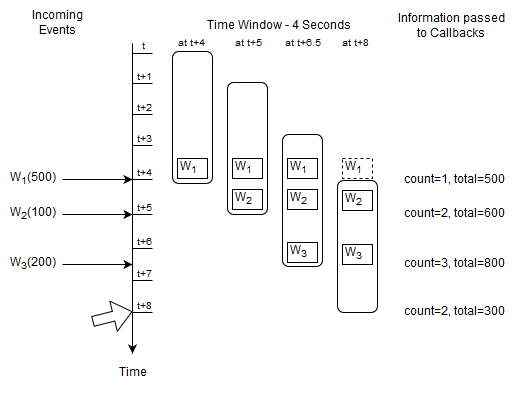

The diagram starts at a given time t and displays the contents of the time window at t + 4 and t + 5 seconds and so on.

The activity as illustrated by the diagram:

At time

t + 4 secondsan eventW1arrives and the output is a count of one and a total of 500.At time

t + 5 secondsan eventW2arrives and the output is a count of two and a total of 600.At time

t + 6.5 secondsan eventW3arrives and the output is a count of three and a total of 800.At time

t + 8 secondseventW1expires and the output is a count of two and a total of 300.

For this statement the runtime remembers the last four seconds of Withdrawal events as well as the long-typed count and the double-typed total amount.

Tip

Time can have a millisecond or microsecond resolution.

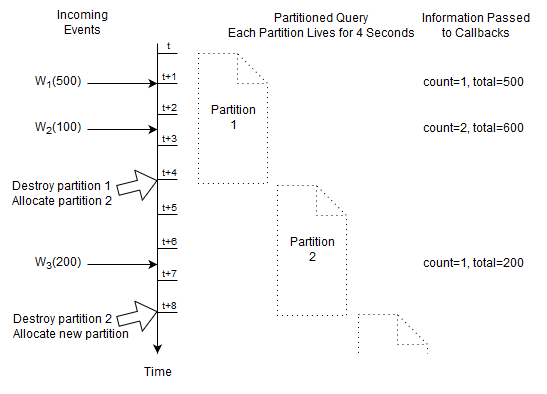

The statements discussed so far are not partitioned. A statement that is not partitioned implicitly has one partition. Upon deploying the un-partitioned statement the runtime allocates the single partition and it destroys the partition when your application undeploys the statement.

A partitioned statement is handy for batch processing, sessions, resetting and start/stop of your analysis. For partitioned statements you must specify a context. A context defines how partitions are allocated and destroyed. Additional information about partitioned statements and contexts can be found at Chapter 4, Context and Context Partitions.

We shall have a single partition that starts immediately and ends after four seconds:

create context Batch4Seconds start @now end after 4 sec

The next statement selects the count and total amount of Withdrawal events that arrived since the last reset (resets are at t, t+4, t+8 as so on), resetting each four seconds:

context Batch4Seconds select count(*), total(amount) from Withdrawal

At time t + 4 seconds and t + 8 seconds the runtime destroys the current partition. This discards the current count and running total.

The runtime immediately allocates a new partition and the count and total start fresh at zero.

For this statement the runtime only remembers the count and running total, and the fact how long a partition lives.

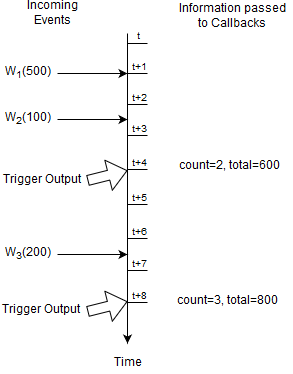

All the previous statements had continuous output. In other words, in each of previous statements output occurred as a result of a new event arriving. Use output rate limiting to output when a condition occurs, as described in Section 5.7, “Stabilizing and Controlling Output: The Output Clause”.

The next statement outputs the last count and total of all Withdrawal events every four seconds:

select count(*), total(amount) from Withdrawal output last every 4 seconds

At time t + 4 seconds and t + 8 seconds the runtime outputs the last aggregation values to callbacks.

For this statement the runtime only remembers the count and running total, and the fact when output shall occur.

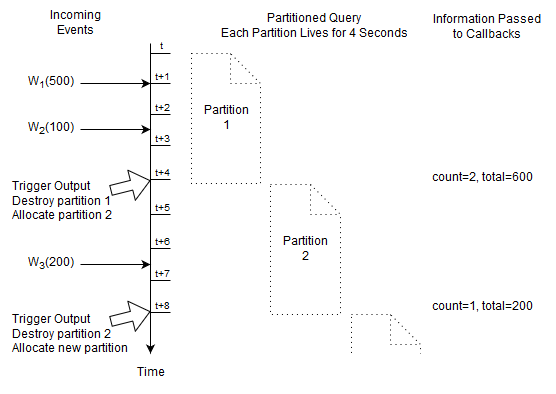

Use a partitioned statement with output rate limiting to output-and-reset. This allows you to form batches, analyze a batch and then forget all such state in respect to that batch, continuing with the next batch.

The next statement selects the count and total amount of Withdrawal events that arrived within the last four seconds at the end of four seconds, resetting after output:

create context Batch4Seconds start @now end after 4 sec

context Batch4Seconds select count(*), total(amount) from Withdrawal output last when terminated

At time t + 4 seconds and t + 8 seconds the runtime outputs the last aggregation values to callbacks, and resets the current count and total.

For this statement the runtime only remembers the count and running total, and the fact when the output shall occur and how long a partition lives.

Named windows manage a subset of events for use by other statements. They can be selected-from, inserted- into, deleted-from and updated by multiple statements.

Tables are similar to named windows but are organized by primary keys and hold rows and columns. Tables can share aggregation state while named windows only share the subset of events they manage.

The documentation link for both is Chapter 6, EPL Reference: Named Windows and Tables. Named windows and tables can be queried with fire-and-forget queries through the API and also the inward-facing JDBC driver.

Named windows declare a window for holding events, and other statements that have the named window name in the from-clause implicitly aggregate or analyze the same set of events.

This removes the need to declare the same window multiple times for different EPL statements.

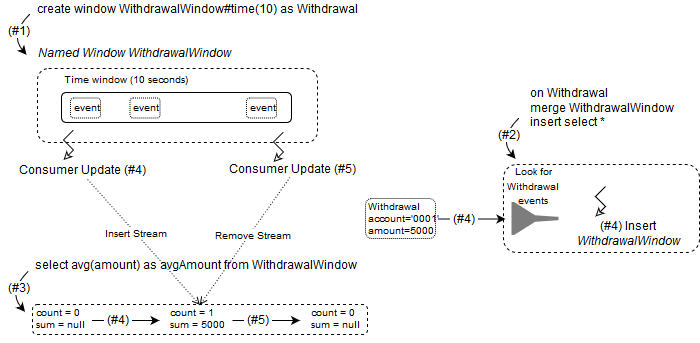

The below drawing explains how named windows work.

The step #1 creates a named window like so:

create window WithdrawalWindow#time(10) as Withdrawal

The name of the named window is WithdrawalWindow and it will be holding the last 10 seconds of Withdrawal events (#time(10) as Withdrawal).

As a result of step #1 the runtime allocates a named window to hold 10 seconds of Withdrawal events. In the drawing the named window is filled with some events. Normally a named window starts out as an empty window however it looks nicer with some boxes already inside.

The step #2 creates an EPL statement to insert into the named window:

on Withdrawal merge WithdrawalWindow insert select *

This tells the runtime that on arrival of a Withdrawal event it must merge with the WithdrawalWindow and insert the event. The runtime now waits for Withdrawal events to arrive.

The step #3 creates an EPL statement that computes the average withdrawal amount of the subset of events as controlled by the named window:

select avg(amount) as avgAmount from WithdrawalWindow

As a result of step #3 the runtime allocates state to keep a current average. The state consists of a count field and a sum field to compute a running average.

It determines that the named window is currently empty and sets the count to zero and the sum to null (if the named window was filled already it would determine the count and sum by iterating).

Internally, it also registers a consumer callback with the named window to receive inserted and removed events (the insert and remove stream). The callbacks are shown in the drawing as a dotted line.

In step #4 assume a Withdrawal event arrives that has an account number of 0001 and an amount of 5000.

The runtime executes the on Withdrawal merge WithdrawalWindow insert select * and thus adds the event to the time window.

The runtime invokes the insert stream callback for all consumers (dotted line, internally managed callback).

The consumer that computes the average amount receives the callback and the newly-inserted event. It increases the count field by one and increases the sum field by 5000.

The output of the statement is avgAmount as 5000.

In step #5, which occurs 10 seconds after step #4, the Withdrawal event for account 0001 and amount 5000 leaves the time window.

The runtime invokes the remove stream callback for all consumers (dotted line, internally managed callback).

The consumer that computes the average amount receives the callback and the newly-removed event. It decreases the count field by one and sets the sum to null and the count is zero.

The output of the statement is avgAmount as null.

Tables in EPL are not just holders of values of some type. EPL tables are also holders for aggregation state. Aggregations in EPL can be simple aggregations, such as count or average or standard deviation, but can also be much richer

aggregations. Examples of richer aggregations are list of events (window and sorted aggregation) or a count-min-sketch (a set of hash tables that store approximations). Your application can easily extend

and provide its own aggregations.

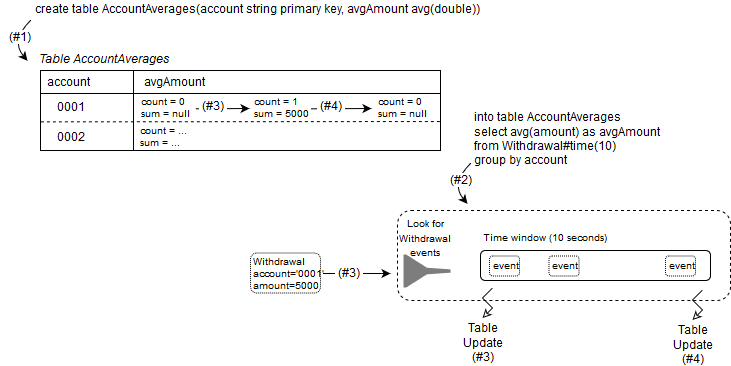

As table columns can serve as holders for aggregation state, they are a central place for updating and accessing aggregation state to be shared between statements. The below drawing explains how tables work with aggregation state.

The step #1 creates a table like so:

create table AccountAverages(account string primary key, avgAmount avg(double))

The table that has string-typed account number as the primary key. The table also has a column that contains an average of double-type values. Note how create table does not need to know how the average gets updated,

it only needs to know that the average is an average of double-type values.

As a result of step #1 the runtime allocates a table. In the drawing the table is filled with two rows for two different account numbers 0001 and 0002. Normally a table starts out as an empty table but let's assume the table has rows already.

In order to store an average of double-type values, the runtime must keep a count and a sum. Therefore in the avgAmount column of the table there are fields for count and sum.

For account 0001 let's say there are currently no values and the count is zero and the sum is null.

The step #2 creates an EPL statement that aggregates the last 10 seconds of Withdrawal events:

into table AccountAverages select avg(amount) as avgAmount from Withdrawal#time(10) group by account

The into table tells the compiler to store aggregations not locally as part of the statement, but into the AccountAverages table instead. The as avgAmount tells the compiler to use the column avgAmount in the table.

The compiler checks that aggregation type and value types match with the table column, and that the group by-clause matches the table primary key.

The runtime looks for Withdrawal events and keeps a 10-second time window. Normally a time window starts out as an empty time window but the drawing shows a few events in the time window.

In step #3 assume a Withdrawal event arrives that has an account number of 0001 and an amount of 5000. The runtime adds the event to the time window.

The runtime updates the avgAmount column of the table specifically the two fields count and sum. It increases the count field by one and increases the sum field by 5000.

In the case when a row for the account number does not exist, the runtime allocates a table row and its columns and aggregation fields.

In step #4, which occurs 10 seconds after step #3, the Withdrawal event for account 0001 and amount 5000 leaves the time window.

The runtime updates the avgAmount column of the table. It decreases the count field by one and sets the sum to null as the count is zero.

Other EPL statements may access table columns by putting the table into a from-clause, or by table-access-expression, on-action statement or fire-and-forget query.

The expressions in the select-clause, the use of aggregation functions and the group-by-clause are relevant to statement design. The overview herein is especially relevant to joins, on-trigger, output-rate-limiting and batch data windows.

If your statement only selects aggregation values, the runtime outputs one row (or zero rows in a join).

Without a group-by clause, if your statement selects non-aggregated values along with aggregation values, the runtime outputs a row per event.

With a group-by clause, if your statement selects non-aggregated values that are all in the group-by-clause, the runtime outputs a row per group.

With a group-by clause, if your statement selects non-aggregated values and not all non-aggregated values are in the group-by-clause, the runtime outputs a row per event.

EPL allows each aggregation function to specify its own grouping criteria. Please find further information in Section 5.6.4, “Specifying Grouping for Each Aggregation Function”. The documentation provides output examples for statement types in Appendix A, Output Reference and Samples, and the next sections outlines each statement type.

The examples below assume BankInformationWindow is a named window defined elsewhere.

The examples use a join to illustrate. Joins are further described in Section 5.12, “Joining Event Streams”.

An example statement for the un-aggregated and un-grouped case is as follows:

select * from Withdrawal unidirectional, BankInformationWindow

Upon a Withdrawal event coming in, the number of output rows is the number of rows in the BankInformationWindow.

The appendix provides a complete example including input and output events over time at Section A.2, “Output for Un-Aggregated and Un-Grouped Statements”.

If your statement only selects aggregation values and does not group, your statement may look as the example below:

select sum(amount) from Withdrawal unidirectional, BankInformationWindow

Upon a Withdrawal event coming in, the number of output rows is always zero or one.

The appendix provides a complete example including input and output events over time at Section A.3, “Output for Fully-Aggregated and Un-Grouped Statements”.

If any aggregation functions specify the group_by parameter and a dimension, for example sum(amount, group_by:account),

the statement executes as an aggregated and grouped statement instead.

If your statement selects non-aggregated properties and aggregation values, and does not group, your statement may be similar to this statement:

select account, sum(amount) from Withdrawal unidirectional, BankInformationWindow

Upon a Withdrawal event coming in, the number of output rows is the number of rows in the BankInformationWindow.

The appendix provides a complete example including input and output events over time at Section A.4, “Output for Aggregated and Un-Grouped Statements”.

If your statement selects aggregation values and all non-aggregated properties in the select clause are listed in the group by clause, then your statement may look similar to this example:

select account, sum(amount) from Withdrawal unidirectional, BankInformationWindow group by account

Upon a Withdrawal event coming in, the number of output rows is one row per unique account number.

The appendix provides a complete example including input and output events over time at Section A.5, “Output for Fully-Aggregated and Grouped Statements”.

If any aggregation functions specify the group_by parameter and a dimension other than group by dimension(s),

for example sum(amount, group_by:accountCategory), the statement executes as an aggregated and grouped statement instead.

If your statement selects non-aggregated properties and aggregation values, and groups only some properties using the group by clause, your statement may look as below:

select account, accountName, sum(amount) from Withdrawal unidirectional, BankInformationWindow group by account

Upon a Withdrawal event coming in, the number of output rows is the number of rows in the BankInformationWindow.

The appendix provides a complete example including input and output events over time at Section A.6, “Output for Aggregated and Grouped Statements”.

EPL offers the standardized match-recognize syntax for finding patterns among events. A match-recognize pattern is very similar to a regular-expression pattern.

The below statement is a sample match-recognize pattern. It detects a pattern that may be present in the events held by the named window as declared above. It looks for two immediately-followed events, i.e. with no events in-between for the same origin. The first of the two events must have high priority and the second of the two events must have medium priority.

select * from AlertNamedWindow

match_recognize (

partition by origin

measures a1.origin as origin, a1.alarmNumber as alarmNumber1, a2.alarmNumber as alarmNumber2

pattern (a1 a2)

define

a1 as a1.priority = 'high',

a2 as a2.priority = 'medium'

)The EPL pattern language is a versatile and expressive syntax for finding time and property relationships between events of many streams.

Event patterns match when an event or multiple events occur that match the pattern's definition, in a bottom-up fashion. Pattern expressions can consist of filter expressions combined with pattern operators. Expressions can contain further nested pattern expressions by including the nested expression(s) in parenthesis.

There are five types of operators:

Operators that control pattern finder creation and termination:

everyLogical operators:

and,or,notTemporal operators that operate on event order:

->(the followed-by operator)Guards are where-conditions that cause termination of pattern subexpressions, such as

timer:withinObservers that observe time events, such as

timer:interval(an interval observer),timer:at(a crontab-like observer)

A sample pattern that alerts on each IBM stock tick with a price greater than 80 and within the next 60 seconds:

every StockTickEvent(symbol="IBM", price>80) where timer:within(60 seconds)

A sample pattern that alerts every five minutes past the hour:

every timer:at(5, *, *, *, *)

A sample pattern that alerts when event A occurs, followed by either event B or event C:

A -> ( B or C)

A pattern where a property of a following event must match a property from the first event:

every a=EventX -> every b=EventY(objectID=a.objectID)

The compliler and runtime, depending on the statements, plan, build and maintain two kinds of indexes: filter indexes and event indexes.

The runtime builds and maintains indexes for efficiency so as to achieve good performance.

The following table compares the two kinds of indexes:

Table 2.2. Kinds of Indexes

| Filter Indexes | Event Indexes | |

|---|---|---|

| Improve the speed of | Matching incoming events to currently-active filters that shall process the event | Lookup of rows |

| Similar to | A structured registry of callbacks; or content-based routing | Database index |

| Index stores values of | Values provided by expressions | Values for certain column(s) |

| Index points to | Currently-active filters | Rows |

| Comparable to | A sieve or a switchboard | An index in a book |

Filter indexes organize filters so that they can be searched efficiently. Filter indexes link back to the statement and partition that the filter(s) come from.

We use the term filter or filter criteria to mean the selection predicate, such as symbol=“google” and price > 200 and volume > 111000.

Statements provide filter criteria in the from-clause, and/or in EPL patterns and/or in context declarations.

Please see Section 5.4.1, “Filter-Based Event Streams”, Section 7.4, “Filter Expressions in Patterns” and Section 4.2.7.1, “Filter Context Condition”.

Filter index building is a result of the compiler analyzing the filter expressions as described in Section 15.18, “Compiler Filter Expression Analysis”. The runtime uses the compiler output to build, maintain and use filter indexes.

Big-O notation scaling information can be found at Section 24.1.1, “Big-O Complexity of Matching Events to Statements and Context Partitions”.

When the runtime receives an event, it consults the filter indexes to determine which statements, if any, must process the event.

The purpose of filter indexes is to enable:

Efficient matching of events to only those statements that need them.

Efficient discarding of events that are not needed by any statement.

Efficient evaluation with best case approximately O(1) to O(log n) i.e. in the best case executes in approximately the same time regardless of the size of the input data set which is the number of active filters.

The runtime builds and maintains separate sets of filter indexes per event type, when such event type occurs in the from-clause or pattern.

Filter indexes are sharable within the same event type filter. Thus various from-clauses and patterns that refer for the same event type can contribute to the same set of filter indexes.

The runtime builds filter indexes in a nested fashion: Filter indexes may contain further filter indexes, forming a tree-like structure, a filter index tree. The nesting of indexes is beyond the introductory discussion provided here.

The from-clause in a statement and, in special cases, also the where-clause provide filter criteria that the compiler analyzes and for which it builds filter indexes.

For example, assume the WithdrawalEvent has an account field. You could create three statements like so:

@name('A') select * from WithdrawalEvent(account = 1)@name('B') select * from WithdrawalEvent(account = 1)@name('C') select * from WithdrawalEvent(account = 2)

In this example, both statement A and statement B register interest in WithdrawalEvent events that have an account value of 1.

Statement C registers interest in WithdrawalEvent events that have an account value of 2.

The below table is a sample filter index for the three statements:

Table 2.3. Sample Filter Index Multi-Statement Example

Value of account | Filter |

|---|---|

1 | Statement A, Statement B |

2 | Statement C |

When a Withdrawal event arrives, the runtime extracts the account and performs a lookup into above table.

If there are no matching rows in the table, for example when the account is 3, the runtime knows that there is no further processing for the event.

For filter index planning, we use the term lookupable-expression to mean the expression providing the filter index lookup value.

In this example there is only one lookupable-expression and that is account.

We use the term value-expression to mean the expression providing the indexed value.

Here there are three value-expressions namely 1 (from statement A), 1 (from statement B) and 2 (from statement C).

We use the term filter-index-operator to mean the type of index such as equals(=), relational (<,>,<=, >=) etc..

As part of a pattern you may specify event types and filter criteria. The compiler analyzes patterns and determines filter criteria for filter index building.

Consider the following example pattern that fires for each WithdrawalEvent that is followed by another WithdrawalEvent for the same account value:

@name('P') select * from pattern [every w1=WithdrawalEvent -> w2=WithdrawalEvent(account = w.account)]

Upon creating the above statement, the runtime starts looking for WithdrawalEvent events. At this time there is only one active filter:

A filter looking for

WithdrawalEventevents regardless of account id.

Assume a WithdrawalEvent Wa for account 1 arrives. The runtime then activates a filter looking for another WithdrawalEvent for account 1.

At this time there are two active filters:

A filter looking for

WithdrawalEventevents regardless of account id.A filter looking for

WithdrawalEvent(account=1)associated tow1=Wa.

Assume another WithdrawalEvent Wb for account 1 arrives. The runtime then activates a filter looking for another WithdrawalEvent for account 1.

At this time there are three active filters:

A filter looking for

WithdrawalEventevents regardless of account id.A filter looking for

WithdrawalEvent(account=1)associated tow1=Wa.A filter looking for

WithdrawalEvent(account=1)associated tow2=Wb.

Assume another WithdrawalEvent Wc for account 2 arrives. The runtime then activates a filter looking for another WithdrawalEvent for account 2.

At this time there are four active filters:

A filter looking for

WithdrawalEventevents regardless of account id.A filter looking for

WithdrawalEvent(account=1)associated tow1=Wa.A filter looking for

WithdrawalEvent(account=1)associated tow1=Wb.A filter looking for

WithdrawalEvent(account=2)associated tow1=Wc.

The below table is a sample filter index for the pattern after the Wa, Wband Wc events arrived:

Table 2.4. Sample Filter Index Pattern Example

Value of account | Filter |

|---|---|

1 | Statement P Pattern w1=Wa, Statement P Pattern w1=Wb |

2 | Statement P Pattern w1=Wc |

When a Withdrawal event arrives, the runtime extracts the account and performs a lookup into above table.

If a matching row is found, the runtime can hand off the event to the relevant pattern subexpressions.

This example is similar to the previous example of multiple statements, but instead it declares a context and associates a single statement to the context.

For example, assume the LoginEvent has an account field. You could declare a context initiated by a LoginEvent for a user:

@name('A') create context UserSession initiated by LoginEvent as loginEvent